Evaluation Concepts

The Llama Stack Evaluation flow allows you to run evaluations on your GenAI application datasets or pre-registered benchmarks.

Llama Stack provides a set of APIs for running evaluations of LLM applications:

- `/datasetio` + `/datasets` API
- `/scoring` + `/scoring_functions` API
- `/eval` + `/benchmarks` API

This guide covers these APIs and the developer workflow for using Llama Stack to run evaluations for different use cases. Check out our Colab notebook for working examples of evaluations.

Evaluation Concepts

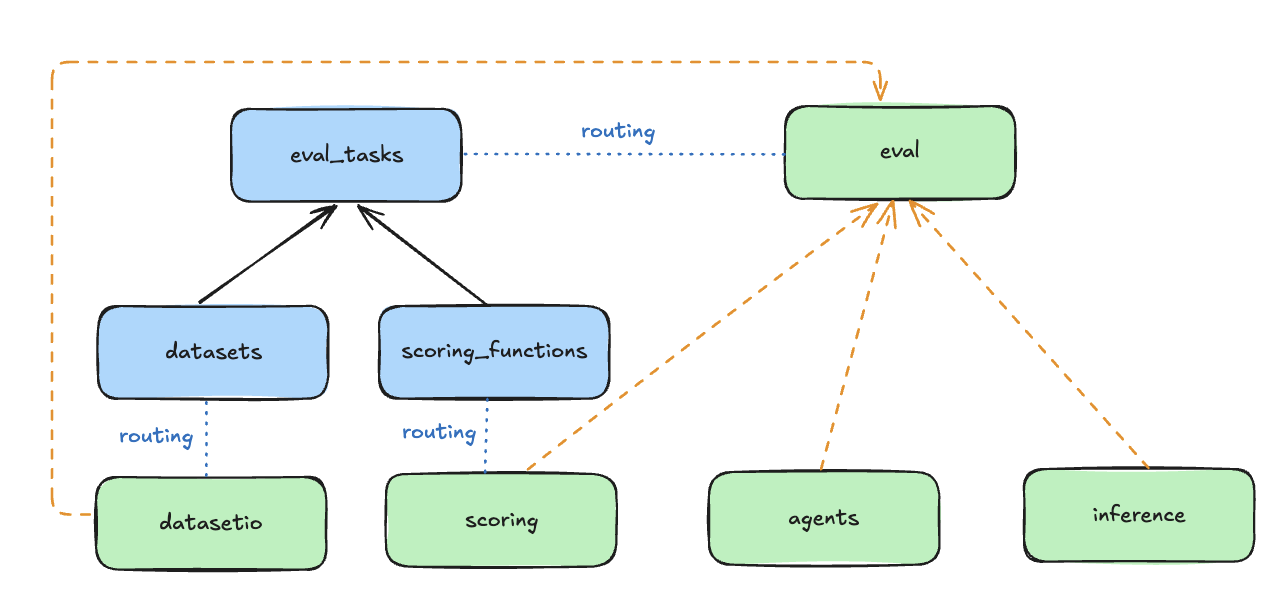

The Evaluation APIs are associated with a set of Resources, as shown in the diagram above. Please visit the Resources section in our Core Concepts guide for a high-level overview.

- DatasetIO: defines the interface to datasets and data loaders.
  - Associated with the `Dataset` resource.
- Scoring: evaluates outputs of the system.
  - Associated with the `ScoringFunction` resource. We provide a suite of out-of-the-box scoring functions, and you can also add custom evaluators. These scoring functions are the core of an evaluation task: they produce the evaluation metrics.
- Eval: generates outputs (via Inference or Agents) and performs scoring.
  - Associated with the `Benchmark` resource.
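To make the relationship between the three resources concrete, here is a minimal, self-contained Python sketch of the flow described above: rows come from a dataset, an output is generated for each row, and scoring functions turn those outputs into metrics. It does not call the Llama Stack client. Every class, function, and field name (`Dataset`, `Benchmark`, `run_eval`, `exact_match`, `input_query`, `expected_answer`, `fake_model`) is a hypothetical stand-in chosen for illustration, and modelling a benchmark as "a dataset plus scoring functions" is an assumption of this sketch rather than a statement about the actual API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# NOTE: all names here are hypothetical stand-ins for illustration only;
# this is not the Llama Stack client API.

Row = Dict[str, str]
ScoringFn = Callable[[str, str], float]  # (generated, expected) -> score


@dataclass
class Dataset:
    """Stand-in for the Dataset resource (served through DatasetIO)."""
    dataset_id: str
    rows: List[Row]


@dataclass
class Benchmark:
    """Stand-in for the Benchmark resource (assumed: dataset + scoring functions)."""
    benchmark_id: str
    dataset: Dataset
    scoring_functions: Dict[str, ScoringFn]


def exact_match(generated: str, expected: str) -> float:
    """Toy scoring function: 1.0 if the answers match, ignoring case and padding."""
    return 1.0 if generated.strip().lower() == expected.strip().lower() else 0.0


def run_eval(benchmark: Benchmark, generate: Callable[[str], str]) -> Dict[str, float]:
    """Eval step: generate an output per row, score it, and average each metric."""
    totals = {name: 0.0 for name in benchmark.scoring_functions}
    for row in benchmark.dataset.rows:
        generated = generate(row["input_query"])
        for name, fn in benchmark.scoring_functions.items():
            totals[name] += fn(generated, row["expected_answer"])
    n = len(benchmark.dataset.rows) or 1  # avoid dividing by zero on an empty dataset
    return {name: total / n for name, total in totals.items()}


def fake_model(prompt: str) -> str:
    """Placeholder for Inference or Agents: returns canned answers."""
    canned = {"Capital of France?": "Paris", "2 + 2?": "5"}
    return canned.get(prompt, "")


dataset = Dataset(
    dataset_id="toy-qa",
    rows=[
        {"input_query": "Capital of France?", "expected_answer": "paris"},
        {"input_query": "2 + 2?", "expected_answer": "4"},
    ],
)
benchmark = Benchmark("toy-benchmark", dataset, {"exact_match": exact_match})
metrics = run_eval(benchmark, fake_model)
print(metrics)  # {'exact_match': 0.5}
```

Swapping `fake_model` for a real generation call, or adding entries to `scoring_functions`, changes the outputs and metrics without touching the evaluation loop, which is the separation of concerns the three resources are meant to express.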

What's Next?

- Check out our Colab notebook for working examples of running benchmark evaluations.

- Check out our Building Applications - Evaluation guide for more details on how to use the Evaluation APIs to evaluate your applications.

- Check out our Evaluation Reference for more details on the APIs.